Parallel Shells: distributing split-merge with ClusterODM

Code/community sprints have a fascinating energy. Below, you can see a bunch of folks laboring away at laptops scattered around the room at OSGeo’s 2019 Community Sprint: a dance of introversion and extroversion, of code development and community collaboration.

Part of the OpenDroneMap team is here for a while, working on some interesting opportunities. Tonight, I want to highlight an extension of the split-merge work mentioned earlier: distributed split-merge. Distributed split-merge builds on a lot of existing work, plus some novel and substantial engineering, to solve the problem of distributing the processing of larger datasets across multiple machines.
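
Split-merge starts by breaking one large image set into smaller, overlapping submodels that can be reconstructed independently and later aligned using the images they share. ODM's own splitter is more sophisticated than this; the sketch below is a deliberately simplified, grid-based stand-in. It assumes each image already carries projected x/y coordinates in meters, buckets images into square cells, and then pads each cell with any image within an `overlap` distance of its bounds. The `Image` type, `split_with_overlap`, and its parameters are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Image:
    name: str
    x: float  # easting in meters (assumed projected coordinates)
    y: float  # northing in meters


def split_with_overlap(images, cell_size, overlap):
    """Group images into square cells of side `cell_size`, then add every image
    within `overlap` meters of a cell's bounds so neighbouring submodels share images."""
    if not images:
        return []
    min_x = min(im.x for im in images)
    min_y = min(im.y for im in images)

    # First pass: find which grid cells actually contain images.
    occupied = set()
    for im in images:
        occupied.add((int((im.x - min_x) // cell_size), int((im.y - min_y) // cell_size)))

    # Second pass: build each submodel from its cell plus an overlap buffer.
    submodels = []
    for cx, cy in sorted(occupied):
        x0, y0 = min_x + cx * cell_size, min_y + cy * cell_size
        x1, y1 = x0 + cell_size, y0 + cell_size
        members = [
            im for im in images
            if x0 - overlap <= im.x < x1 + overlap and y0 - overlap <= im.y < y1 + overlap
        ]
        submodels.append(members)
    return submodels


if __name__ == "__main__":
    grid = [Image(f"img_{i}_{j}.jpg", 10.0 * i, 10.0 * j) for i in range(10) for j in range(10)]
    for sub in split_with_overlap(grid, cell_size=50.0, overlap=10.0):
        print(len(sub), "images")
```

On a 10 x 10 grid of images spaced 10 m apart, 50 m cells with 10 m of overlap yield four submodels of 36 images each. The overlap is what makes merging possible: every submodel shares a strip of images with its neighbours.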

Image of the code sprint.

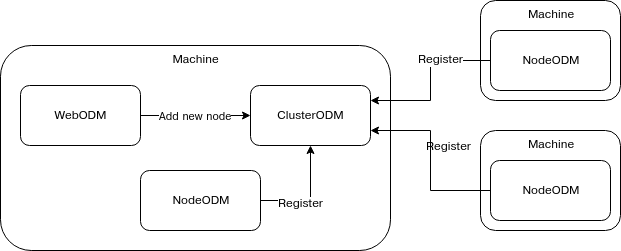

This is, after all, the current promise of Free and Open Source Software: scalability. But while FOSS licenses allow for this, a fair amount of engineering goes into making that potential a reality (HT Piero Toffanin / Masserano Labs). It also requires a new, modified project: ClusterODM, a rename and extension of MasseranoLabs' NodeODM-proxy. Doing this properly takes several new pieces of tech to distribute the work, collect it, redistribute it, and then recollect and reassemble all the products.
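
Once submodels exist, something has to hand them out to processing nodes and gather the results before the merge; in this setup, that is ClusterODM's job. The sketch below shows only the shape of that distribute-and-collect loop. It assumes a simple round-robin assignment (not necessarily how ClusterODM chooses nodes) and uses a stub, `process_on_node`, in place of the real upload, process, and download round trip to a NodeODM instance. All function and node names here are hypothetical.

```python
import itertools
from concurrent.futures import ThreadPoolExecutor


def assign_round_robin(items, nodes):
    """Pair each item with a node, cycling through the node list."""
    return list(zip(items, itertools.cycle(nodes)))


def process_on_node(node, submodel_id, images):
    """Hypothetical stand-in for sending a submodel to a NodeODM instance.

    A real version would upload the images, wait for processing, and
    download the products; this stub only reports what it was given.
    """
    return {"node": node, "submodel": submodel_id, "images": len(images)}


def distribute_and_collect(submodels, nodes):
    """Dispatch every submodel to a node concurrently and gather the results."""
    assignments = assign_round_robin(list(enumerate(submodels)), nodes)
    # At most len(nodes) submodels are in flight at once.
    with ThreadPoolExecutor(max_workers=len(nodes)) as pool:
        futures = [
            pool.submit(process_on_node, node, submodel_id, images)
            for (submodel_id, images), node in assignments
        ]
        # Reading futures in submission order keeps results aligned with submodel ids.
        return [future.result() for future in futures]


if __name__ == "__main__":
    submodels = [["a.jpg", "b.jpg"], ["c.jpg"], ["d.jpg", "e.jpg", "f.jpg"]]
    for result in distribute_and_collect(submodels, ["node-1", "node-2"]):
        print(result)
```

Round-robin is the simplest fair assignment when nodes are identical, as the ten secondaries here are; a real scheduler would also have to account for node load, failures, and retries before handing the collected pieces to the merge step.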

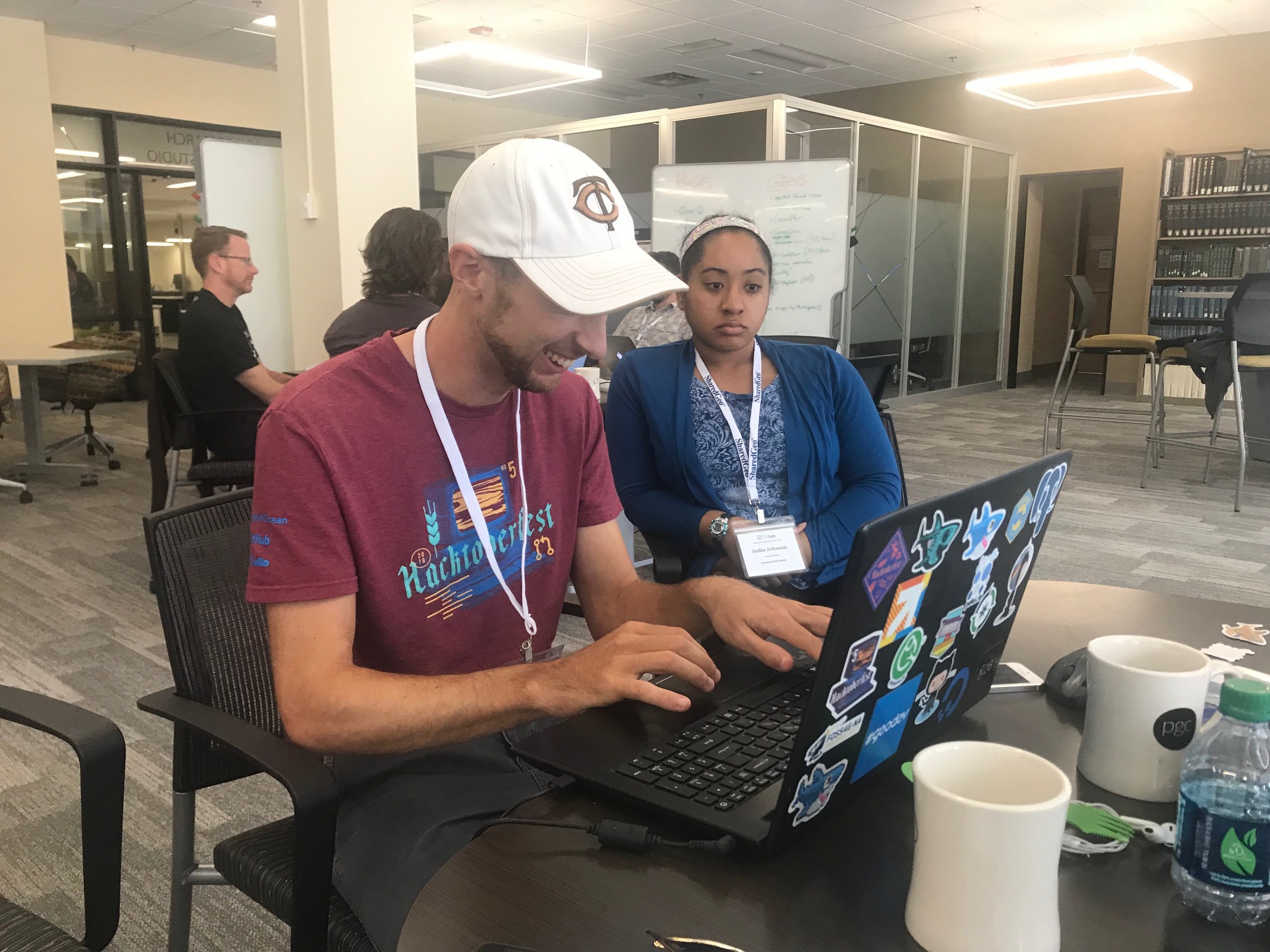

Piero Toffanin with parallel shells to set up multiple NodeODM instances

————————————————————————————————–

“Good evening, Mr. Briggs.”

The mission: to process 12,000 images over Dar es Salaam, Tanzania, in 48 hours. Not 17 days. 2 days. To do this, we need 11 large machines (a primary node with 32 cores and 64 GB of RAM, plus 10 secondary nodes with 16 cores and 32 GB of RAM each) and a way to distribute the tasks, align them, and put everything back together. Something like this:

… just with a few more nodes.
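
The numbers in the mission are worth a quick back-of-the-envelope check. The sketch below totals the cluster's cores and RAM and computes the speedup implied by going from 17 days to 48 hours, taking the 17-day figure at face value as the baseline. It assumes, for illustration only, that the 10 secondary nodes process the images while the primary coordinates and merges, and it ignores images duplicated by submodel overlap. `Machine` and `cluster_budget` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Machine:
    cores: int
    ram_gb: int


def cluster_budget(primary, secondary, n_secondary, n_images, baseline_hours, target_hours):
    """Summarize the cluster's resources and the speedup the deadline demands."""
    return {
        "total_cores": primary.cores + n_secondary * secondary.cores,
        "total_ram_gb": primary.ram_gb + n_secondary * secondary.ram_gb,
        # Assumption: only secondaries process images; overlap duplicates are ignored.
        "images_per_secondary": n_images / n_secondary,
        "required_speedup": baseline_hours / target_hours,
    }


budget = cluster_budget(Machine(32, 64), Machine(16, 32), 10, 12_000, 17 * 24, 48)
print(budget)
# {'total_cores': 192, 'total_ram_gb': 384, 'images_per_secondary': 1200.0, 'required_speedup': 8.5}
```

In other words, the split has to deliver roughly an 8.5x improvement over the 17-day figure, with about 1,200 images per secondary node before overlap is added.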

Piero Toffanin and India Johnson testing ClusterODM

This is the second dataset to be tested with distributed split-merge, and the largest to be processed in OpenDroneMap to a fully merged state. Honestly, we don’t know what will happen: will the pieces process successfully and stitch back together into a seamless dataset? Time will tell.

For the record, the parallel shells were merely for NodeODM machine setup.

Actually distributing the jobs? Easy: